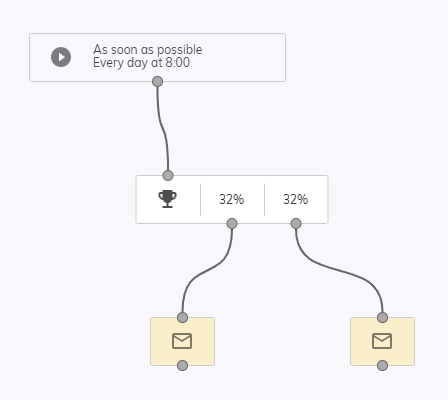

Automatic A/B testing lets you test several variants of the same campaign simultaneously before sending the winning variant to the rest of the audience. The winner is evaluated after a specified timeframe using a specified metric, and it is then used in all following runs of the Flow campaign.

How to set up Automatic A/B testing

First, set up the variations you’ll be testing. Each customer in the test group receives one of the variants. Once the test has been evaluated, the rest of the database automatically receives the winning variant.

Right now, Email is the only action you can use as a follow-up.

Variations

- A test can contain up to 10 variants.

- Each variant should differ in only one tested attribute (for example, the email subject when testing open rate); all other settings of the “Email” action should be the same. This makes it easy to determine which attribute has the most influence on the chosen metric.

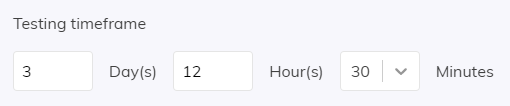

Testing Timeframe:

- The time period over which the variants are measured. Once it ends, the winner is determined based on the specified metric.

- The timeframe starts counting from the moment a customer is added to the Competitors audience.

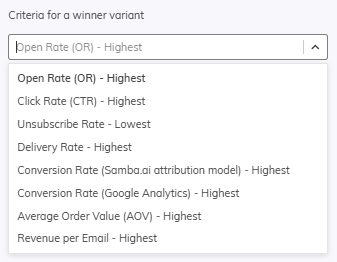
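The timing rule can be sketched in a few lines of Python. This is only an illustration, not Samba.ai's implementation: the function names are hypothetical, and it assumes the timer starts when the first customer is added to the Competitors audience. It also shows why saving a timeframe that has already passed leads to an immediate evaluation.

```python
from datetime import datetime, timedelta


def evaluation_due(test_started_at: datetime, timeframe: timedelta) -> datetime:
    """Moment at which the test becomes due for evaluation.

    Assumption: `test_started_at` is when the first customer entered the
    Competitors audience. The names used here are hypothetical.
    """
    return test_started_at + timeframe


def should_evaluate_now(test_started_at: datetime,
                        timeframe: timedelta,
                        now: datetime) -> bool:
    """True once the configured timeframe has fully elapsed."""
    return now >= evaluation_due(test_started_at, timeframe)


started = datetime(2024, 3, 1, 9, 0)
now = datetime(2024, 3, 3, 9, 0)  # two days after the test started

# A 7-day timeframe is still running after two days.
still_running = should_evaluate_now(started, timedelta(days=7), now)      # False

# Shortening the timeframe to 1 day means it has already passed,
# so the test would be evaluated as soon as the campaign is saved.
already_passed = should_evaluate_now(started, timedelta(days=1), now)     # True
```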

Types of evaluation metrics and examples of their use

- Delivery rate: For email delivery testing

- Open rate: For testing subjects of emails

- Click rate: For testing templates and click-throughs

- Unsubscribe rate: For unsubscription testing

- Conversion rate / AOV / Revenue per email: For conversions testing

There are two options for Conversion Rate: the Samba.ai attribution model and Google Analytics. We recommend choosing the one that matches the attribution model set up on your profile (Eshop Settings > Integrations > Conversion Tracking).

- If you have Google Analytics as your Conversion Analysis Method, we recommend that you select Conversion Rate (Google Analytics) in A/B testing as well.

- If, on the other hand, you use the Samba.ai attribution model, we recommend that you select the Conversion Rate (Samba.ai attribution model) option in A/B testing as well.

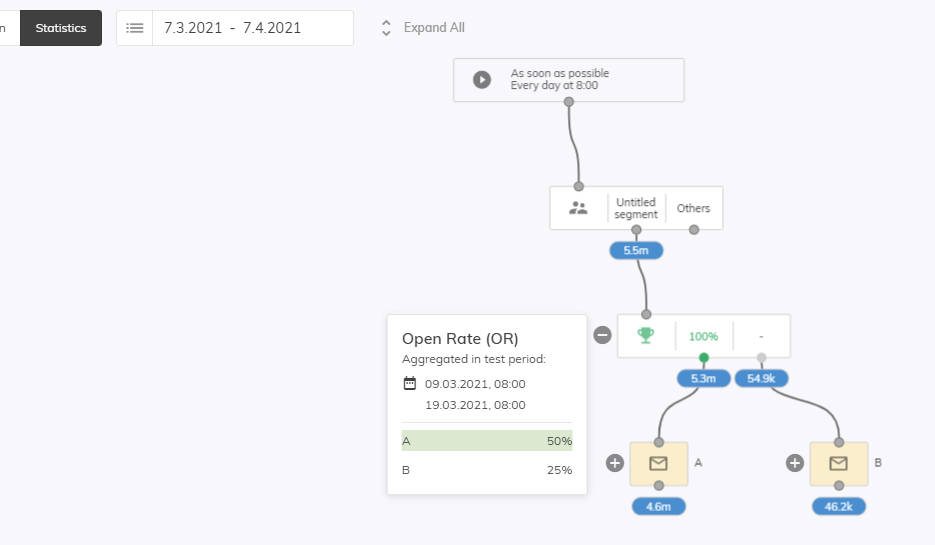

Evaluating the Automatic A/B test

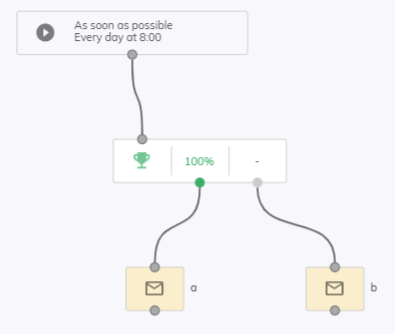

The winning variant is selected based on the chosen metric within the testing timeframe. If several variants have the same value, one of them is selected at random.
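A minimal Python sketch of this selection rule is shown below. It is an illustration under stated assumptions, not the product's actual code: `pick_winner` and its parameters are hypothetical names, and the `higher_is_better` switch reflects the assumption that a lower value would be preferred for a metric such as unsubscribe rate.

```python
import random
from typing import Mapping, Optional


def pick_winner(metric_by_variant: Mapping[str, float],
                higher_is_better: bool = True,
                rng: Optional[random.Random] = None) -> str:
    """Return the variant with the best metric value, breaking ties at random.

    Hypothetical helper: the direction of "best" per metric is an assumption.
    """
    if not metric_by_variant:
        raise ValueError("at least one variant is required")
    rng = rng or random.Random()
    choose = max if higher_is_better else min
    best = choose(metric_by_variant.values())
    # Every variant that reached the best value is an equal candidate.
    tied = [name for name, value in metric_by_variant.items() if value == best]
    return rng.choice(tied)


open_rates = {"A": 0.21, "B": 0.27, "C": 0.27}
winner = pick_winner(open_rates)  # "B" or "C", picked at random
```

Because ties are resolved randomly, repeating the same evaluation on identical numbers may give a different winner.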

As soon as the evaluation is done, the winning variant is sent immediately to the winner audience collected during previous cycles (Flow campaign runs). In all follow-up runs of the Flow campaign, the winning variant goes to 100% of the audience.

The results show data for the whole timeframe of the test; you cannot use the date picker to select a specific date here.

Advanced settings for the A/B testing automation

- Changes to the automatic A/B test settings while the test is still active:

    - Instant evaluation

        - If you set a timeframe that has already passed, you will be notified. If you save the campaign anyway, the automatic A/B test is evaluated immediately.

    - Test reset

        - If you want to re-evaluate the whole test (and drop all gathered results), you can use the Test reset.

        - Information about the winning variant, the time of evaluation and the time the test began will be deleted.

        - You do not need a reset if you only want to change the evaluation metric or the timeframe, or add another variant to the test. In that case, all previously gathered data is taken into account.

    - Manual variant rejection

        - If you want to reject a specific variant before the test ends, you need to remove it completely. Disabling the Email action is not enough, because such a variant can still win the test.
